Story

Urban Digital Twins hold promise for spatial planning, but most remain underutilised, misunderstood and misinterpreted. As the number of data sources grows, their complex interfaces create capability barriers for stakeholders, limiting the return on substantial public investment. SmartwayZ.NL (Province of North Brabant) aimed to understand how to make spatial data in Urban Digital Twins more accessible to a broader range of users, not just technical specialists. The project builds on innovative projects such as Digital Urban Brabant, DIGIREAL and VHESPER, and is part of a long-term strategic triple helix collaboration between SmartwayZ.NL, Cradle, and Argaleo. HUMBOLDT was therefore born from a straightforward idea:

What if you could simply talk to a Digital Twin?

Answering this question required combining Cradle’s extensive expertise in creating realistic, expressive Virtual Humans with validated spatial data from the Province of North Brabant. This data covers demographics, household composition, migration backgrounds, rail accessibility, and urban mobility research across hundreds of neighbourhoods in Brabant. From this convergence, the following technical pillars emerged:

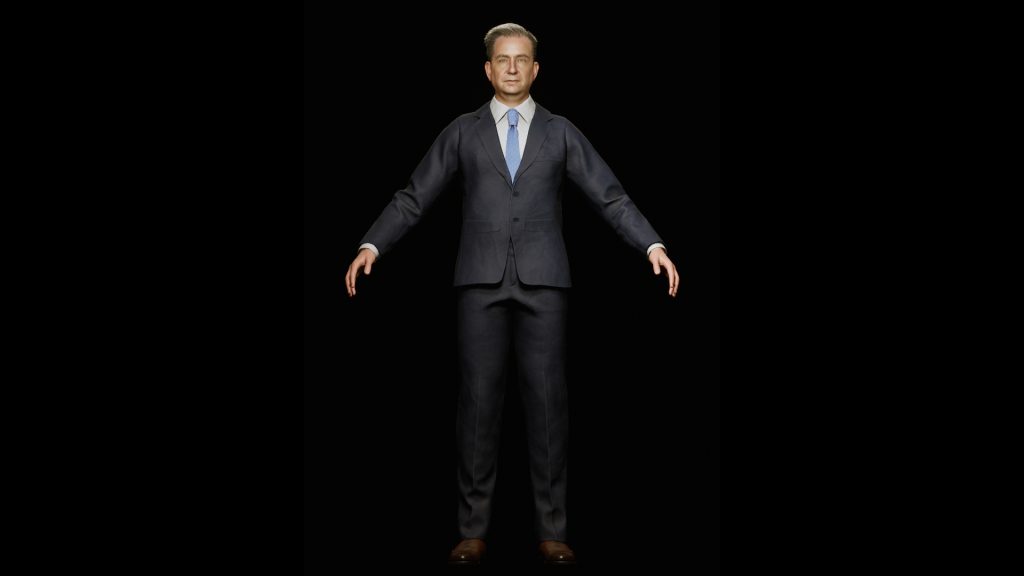

Photogrammetry of the client: A digital replica of the physical appearance of Dr. Joost de Kruijf, captured through a dedicated photogrammetry and motion capture session, used to create a personalised Virtual Human with real-time lip-sync and emotional expression capabilities.

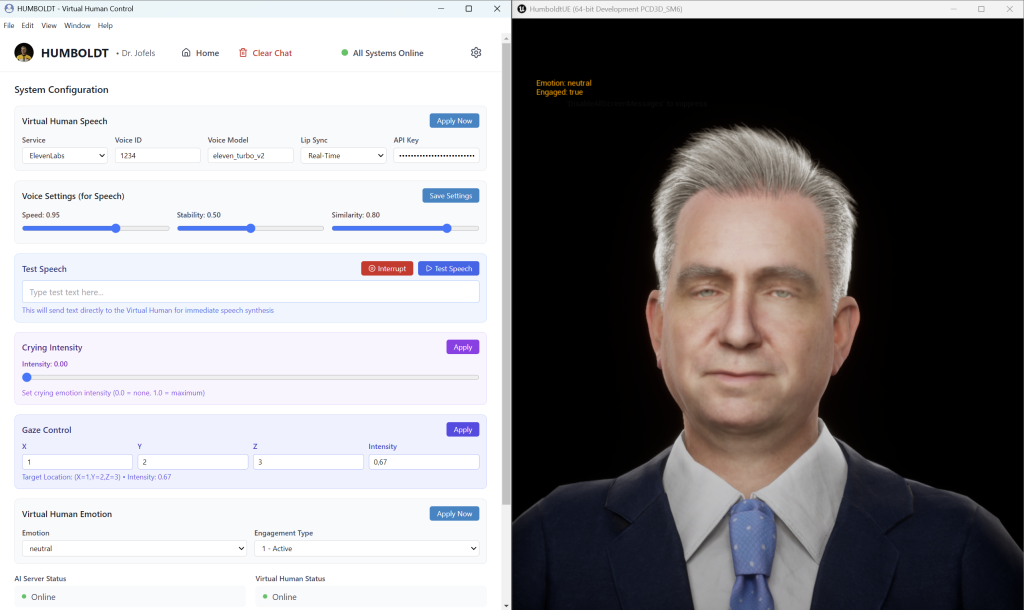

AI-generated professional audio: A cloned voice of Dr. Joost de Kruijf, generated through ElevenLabs’ Professional Voice Clone pipeline, enabling the Virtual Human to speak with an authentic, recognisable voice.

AI models for content generation on urban mobility data: A self-hosted Retrieval-Augmented Generation pipeline grounded in validated spatial datasets from CBS and Argaleo, ensuring every answer provided by Dr. Jofels (the resulting Virtual Human) is factual, locally aware, and traceable to its source data.

Results

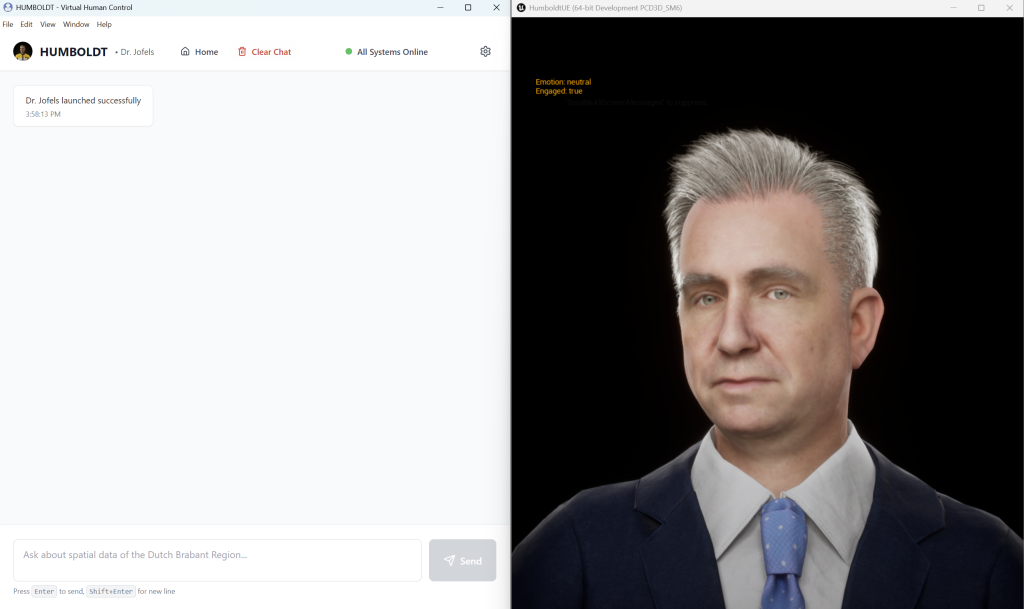

HUMBOLDT successfully delivered a working proof of concept that integrates all three pillars (voice, appearance, and intelligence) into a single conversational Virtual Human, packaged as a desktop application. The system demonstrated the ability to answer natural language questions about Brabant’s neighbourhoods, municipalities, and regional demographics, as well as domain-specific mobility research questions on modal splits and cycling willingness.

Rather than navigating dashboards or querying databases manually, a user asks Dr. Jofels questions in plain language, such as “Which municipalities in Brabant have the highest share of elderly residents?” or “How is rail accessibility by bike in this neighbourhood?” A Virtual Human with a hyper-realistic appearance based on a real person (Dr. Joost de Kruijf), speaking in his cloned voice, answers with data-grounded, conversational responses.

The proof of concept was publicly unveiled at the SmartwayZ.NL Networking Dinner in January 2026, where Dr. Jofels was demonstrated live to an audience of policymakers, industry representatives, and knowledge institutes. It serves as the foundation for future scalable applications across urban planning, mobility ecosystems, and Digital Twin accessibility, and as a transferable innovation that can be applied to other Urban Digital Twins and regions throughout the Netherlands and beyond.

Technical details

At the heart of HUMBOLDT is Dr. Jofels, a conversational Virtual Human alter ego based on the image and voice of Dr. Joost de Kruijf. Creating Dr. Jofels involved three parallel workstreams: visual appearance, voice and intelligence.

For the visual side, photogrammetry of the client was used to capture facial geometry and texture, serving as the foundation for a custom virtual human built in MetaHuman in Unreal Engine. A photogrammetry scan of Joost’s hair was also created and used as a base reference to manually build a custom hair groom in Houdini that matched his likeness. The MetaHuman was then integrated with Cradle’s custom modules, which add the subtle details that make interactions feel human (emotional expression, eye movement, and lip-sync) and enable it to react and speak in real time.

For the voice, we recorded 2.5 hours of speech from the client, both scripted and conversational, which was used to train a professional voice clone through ElevenLabs. Several voice models were tested, and the client chose the one that sounded most like them. When Dr. Jofels speaks, the generated audio drives the avatar’s lip movements in real time.

In conversation, the system uses a retrieval-augmented generation (RAG) approach: rather than relying on a general-purpose AI alone, each question is matched against a curated knowledge base built from validated CBS neighbourhood data, Argaleo mobility research, and scientific articles, and the retrieved passages are supplied to the language model as context. This keeps answers grounded in real, traceable data. The whole system (chat interface, AI server, and Virtual Human) runs as a desktop application with a modular architecture, making it straightforward to swap in different data sources or AI models in the future.
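To make the retrieval step more concrete, the following is a minimal sketch of how a question could be matched against a knowledge base and turned into a source-tagged prompt. It is an illustration under stated assumptions, not HUMBOLDT's actual implementation: the knowledge-base entries are placeholder text rather than real CBS or Argaleo data, the names `KNOWLEDGE_BASE`, `embed`, `retrieve` and `build_prompt` are hypothetical, and a toy word-count similarity stands in for the learned embedding model a production pipeline would use.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base entries: placeholder text, NOT real CBS or Argaleo data.
KNOWLEDGE_BASE = [
    {"source": "cbs-demographics (placeholder)",
     "text": "Share of elderly residents per municipality in Brabant."},
    {"source": "argaleo-mobility (placeholder)",
     "text": "Rail accessibility by bike per neighbourhood."},
    {"source": "mobility-research (placeholder)",
     "text": "Modal split and cycling willingness of commuters."},
]


def embed(text):
    """Toy stand-in for an embedding model: lower-cased word counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, k=2):
    """Return the k knowledge-base entries most similar to the question."""
    q = embed(question)
    ranked = sorted(
        KNOWLEDGE_BASE,
        key=lambda doc: cosine(q, embed(doc["text"])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, passages):
    """Assemble a prompt that keeps each passage tagged with its source."""
    context = "\n".join(f"[{p['source']}] {p['text']}" for p in passages)
    return (
        "Answer using only the context below and cite the source tags.\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    question = "How is rail accessibility by bike in this neighbourhood?"
    print(build_prompt(question, retrieve(question, k=1)))
```

The resulting prompt would then be passed to the language model. Carrying the source tag alongside each passage is one way to keep answers traceable, and because retrieval is isolated in `retrieve`, the similarity function or the data source can be replaced without touching the rest of the flow.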

Tech stack: Unreal Engine 5.7 · MetaHuman · RealityCapture · Houdini · ZBrush · Substance Painter · XNormal · ElevenLabs PVC · LLaMA-3 · Sentence-Transformers · Electron (React + TypeScript) · FastAPI · Docker