Story

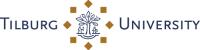

Despite considerable improvements in recent years, Mid-Brabant continues to rank among the lower-scoring Dutch regions on Broad Prosperity indicators, including health, education, and social participation. New technologies such as generative AI and immersive media offer promising ways to address these challenges, yet they also carry risks. AI is reshaping work, learning, and care at a rapid pace, and without deliberate regional experimentation and capacity building, this transition may deepen rather than close existing social divides. Virtual humans are a particularly compelling approach in this context. They have the potential to support lifelong learning, empowerment, and accessible guidance for citizens who currently lack access to professional support, but only if they are designed with care and contextual understanding.

Within MindLabs, partners already leverage AI and immersive technology to develop, apply, and evaluate interventions addressing societal challenges (e.g., Project VIBE). Building on this pioneering work, the consortium sees an urgent opportunity to advance virtual human applications that promote inclusion, health, and empowerment, embedding them in the Tilburg Innovation District for lasting societal and economic benefit.

This project addresses a critical gap: the absence of inclusive, evidence-based methods for deploying AI-driven virtual humans in social domains. The end goal is to advance scientific and applied understanding of virtual humans as a tool for Broad Prosperity, by:

- Developing and testing empathic, multimodal virtual humans capable of recognizing emotional signals and responding appropriately.

- Using these virtual humans to support individuals and communities in domains such as health promotion, education, and media literacy.

- Evaluating how such interactions can foster trust, engagement, learning, and social cohesion, while ensuring ethical, transparent, and inclusive adoption.

Results

Cradle contributes applied R&D on virtual human development, translating research into demonstrators that can be tested with users and partners in real contexts across education, healthcare, and media. Through its activities, BUas pursues the following goals:

- Visual Realism: Understand the effect of hyper-realistic visual portrayals of virtual humans on trust, likeability, and attention span.

- Dynamic Expressions: Understand the effect of dynamic versus static expressions on the perception of and reaction to emotions exhibited by virtual humans (see Project VHESPER for more information).

- Non-verbal Mimicry: Understand the effect of non-verbal mimicry (e.g., gestures, posture, facial expressions) performed by virtual humans on the level of engagement, trust, and connection experienced by natural humans.

- Voice and Dialogue: Understand the effect of more realistic voices, intonations, emotional loading and turn-taking on perceived trust, authenticity, and attention span in conversations with a virtual human.

- Multimodal Data: Explore how the integration of multimodal training data (large language models, curated documents, and sensor data) can be leveraged to enable richer and more meaningful human–AI interactions.

- Cross-Domain Realism: Understand how the degree of realism in virtual humans influences user experiences and behaviors across diverse application domains (health, journalism, entertainment, training, and education).

The main project outputs BUas has delivered so far are:

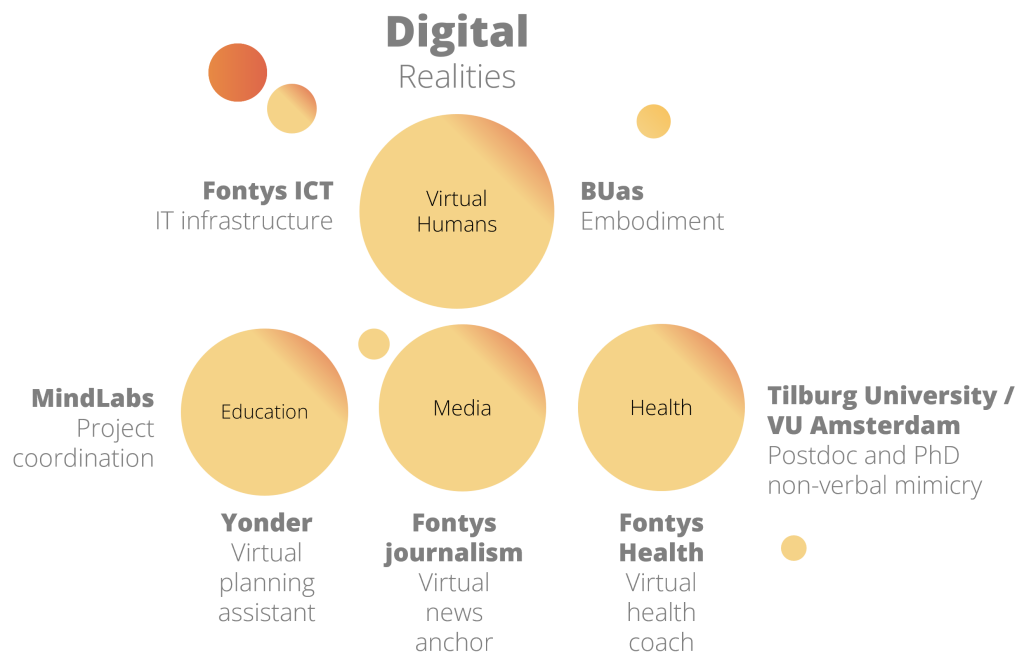

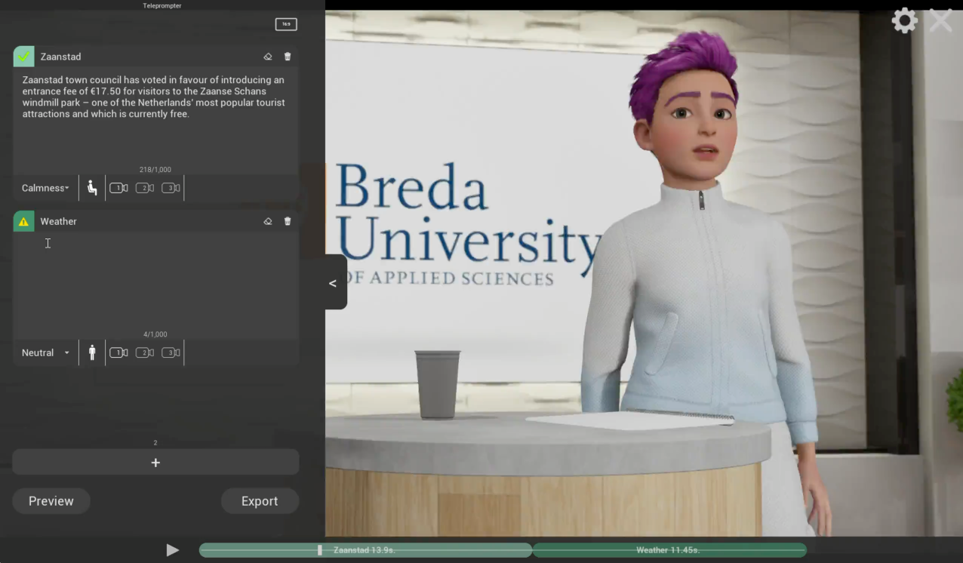

AI-driven digital news anchor prototype: A virtual human news anchor was developed to read news items using AI-generated voice and automatically produce short-form video content for social media. The prototype was built in Unreal Engine 5.4 with the MetaHuman plugin and Azure AI Speech for lip-synced voice synthesis. The prototype places the virtual human in a virtual TV studio environment with configurable lighting, camera angles, and set design. Users input dialogue, assign emotional tones per segment, and generate a fully animated, lip-synced performance that can be exported as a video file. The goal is to explore the believability and acceptability of news presented by a virtual human, serving as a testbed for a journalism and media literacy use case.
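The per-segment tone assignment described above could be sketched as follows. The source does not say how emotional tones reach the voice synthesis, so this is only one possibility: building an SSML document that uses the `mstts:express-as` element documented for Azure AI Speech. The function name `build_ssml`, the voice `en-US-JennyNeural`, and the style names are assumptions for illustration, and style support varies by voice.

```python
from xml.sax.saxutils import escape, quoteattr


def build_ssml(segments, voice="en-US-JennyNeural", lang="en-US"):
    """Build one SSML document from (text, style) pairs.

    `segments` is a list of (text, style) tuples; a style of None leaves
    the segment in the voice's default tone. Voice and style names are
    illustrative assumptions, not taken from the prototype.
    """
    parts = []
    for text, style in segments:
        body = escape(text)  # escape &, <, > so the XML stays well-formed
        if style:
            body = (
                f"<mstts:express-as style={quoteattr(style)}>"
                f"{body}</mstts:express-as>"
            )
        parts.append(body)
    return (
        '<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" '
        f'xmlns:mstts="https://www.w3.org/2001/mstts" xml:lang={quoteattr(lang)}>'
        f"<voice name={quoteattr(voice)}>{''.join(parts)}</voice></speak>"
    )


ssml = build_ssml([
    ("Good evening, here is the news.", None),
    ("Local teams won big & fans celebrated.", "cheerful"),
])
```

The resulting string would then be passed to the speech service. How the prototype drives lip-sync and facial animation from the same segments is not covered by this sketch.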

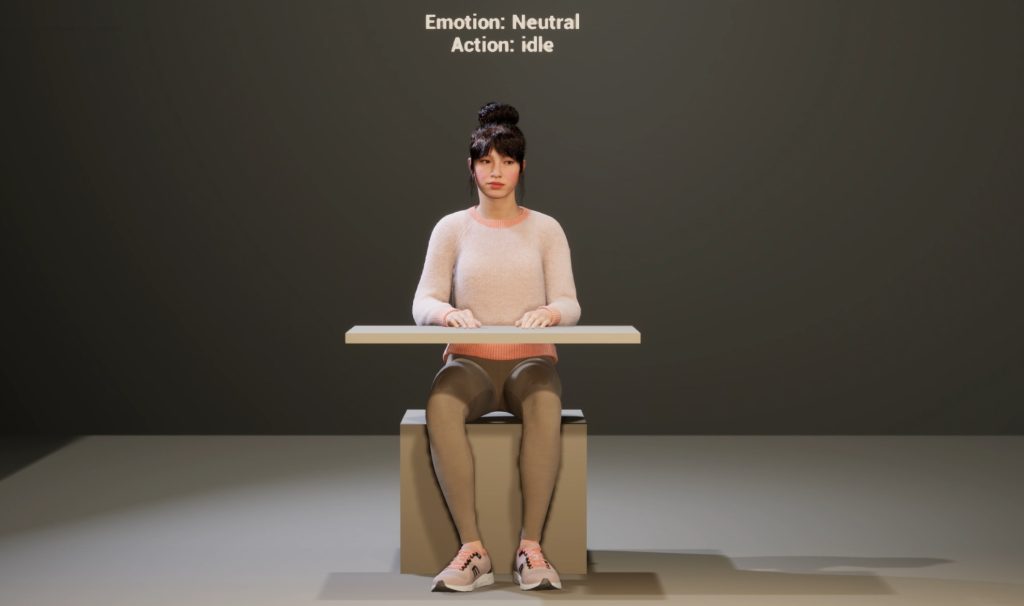

Virtual human for an experiment on The Roles of Mimicry and Synchrony in Virtual Human–Mediated Emotion Regulation: An interactive virtual human, Hana, was developed for an experiment led by VU Amsterdam. The experiment uses a 2×2 within-subject factorial design (mimicry on/off × synchrony on/off) to unpack the distinct roles of mimicry and synchrony in emotion regulation and bonding. Hana acts as a conversational coach across four emotionally themed topics: academic stress, loneliness, emotional exhaustion, and uncertainty about the future. During listening phases, Hana’s behaviour is determined by the assigned condition: randomised movements (neither mimicry nor synchrony), mimicry only (replicating user gestures with a 3–5 s delay), synchrony only (responding to detected movement in the opposite body region within 0–3 s), or mimicry combined with synchrony (replicating user gestures within 0–3 s). User movements are captured via webcam, and gesture onsets, speech segments, and condition data are logged for behavioural and statistical analysis.
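The four listening-phase conditions can be summarised as a small lookup table. The sketch below is a minimal illustration that uses the delay windows stated above. The names `Condition`, `CONDITIONS`, and `plan_response`, the action labels, and the choice to draw the delay uniformly within each window are assumptions, not details of the actual experiment software.

```python
import random
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Condition:
    name: str
    mimicry: bool
    synchrony: bool
    # Response delay window in seconds; None means movements are
    # randomised and not contingent on the user.
    delay_window: Optional[Tuple[float, float]]


# Keyed by (mimicry, synchrony), mirroring the 2x2 design.
CONDITIONS = {
    (False, False): Condition("randomised", False, False, None),
    (True, False): Condition("mimicry_only", True, False, (3.0, 5.0)),
    (False, True): Condition("synchrony_only", False, True, (0.0, 3.0)),
    (True, True): Condition("mimicry_and_synchrony", True, True, (0.0, 3.0)),
}


def plan_response(mimicry: bool, synchrony: bool, rng: random.Random) -> dict:
    """Plan the virtual human's reaction to one detected user gesture."""
    cond = CONDITIONS[(mimicry, synchrony)]
    if cond.delay_window is None:
        return {"condition": cond.name, "action": "random_movement", "delay_s": None}
    low, high = cond.delay_window
    # Mimicry replicates the gesture; synchrony alone moves the opposite region.
    action = "replicate_gesture" if cond.mimicry else "move_opposite_region"
    # Uniform sampling within the window is an assumption of this sketch.
    return {"condition": cond.name, "action": action, "delay_s": rng.uniform(low, high)}


plan = plan_response(mimicry=True, synchrony=False, rng=random.Random(42))
```

Keeping the conditions in one table makes it straightforward to log the condition name alongside each gesture onset for later analysis.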

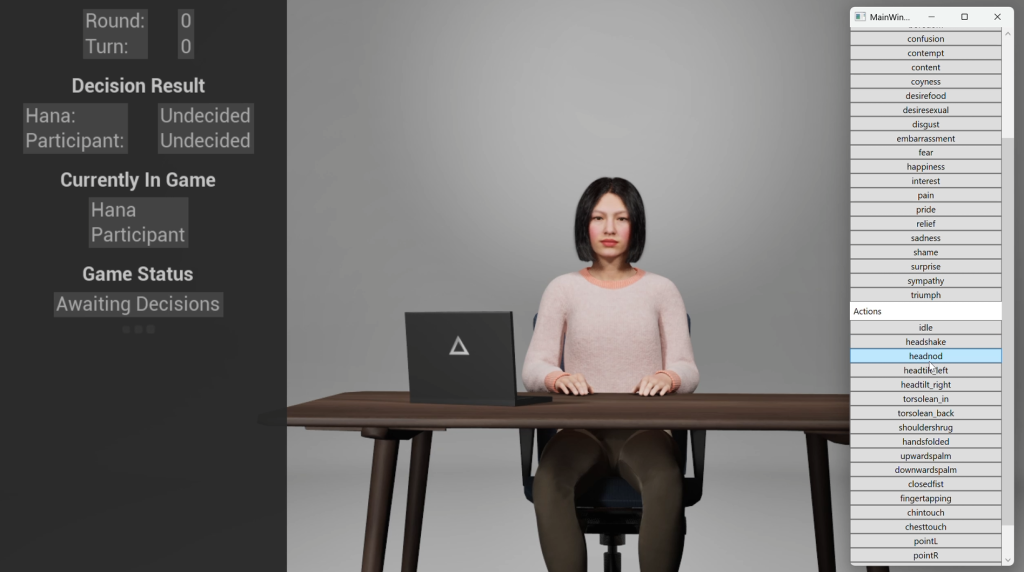

Virtual human for an experiment on Interpersonal Outcomes, Non-verbal Mimicry, and Task Performance in Human–Virtual Human Interaction: Tilburg University is running an experiment to study how non-verbal mimicry by a virtual human affects social outcomes and perceived interaction quality, taking participant gender into account. Participants play three rounds of the board game Diamant against the virtual human Hana, who is shown seated at a table behind a laptop that obscures the lower body and helps maintain realism.

Cradle’s contributions to this experiment cover both the visual design and technical integration. On the visual side, Cradle configured Hana with realistic idle behaviour (neutral expressions, blinking, and subtle breathing) and built out a full mimicry system capable of displaying behavioural mimicry (head posture in pitch, yaw, and roll; head nodding), facial mimicry (smile, frown), and emotional mimicry (anger, disgust, fear, joy, sadness, surprise), all at a calibrated intensity to remain noticeable without undermining realism. On the technical side, Cradle integrated the researcher’s real-time signal detection models into the virtual human’s backend, so that detected user signals directly trigger the corresponding mimicry responses. Cradle also built the game decision display, showing both the participant’s and Hana’s choices (stay or leave) on screen, and programmed variable decision timing for Hana to simulate realistic deliberation. Outcomes measured include anthropomorphism, likability, rapport, trust, warmth, and overall interaction quality.
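The signal-to-response flow described above could look roughly like the sketch below. The three mimicry channels and their signals come from the description; the function names, the `INTENSITY_SCALE` value, the assumption that detected strengths are normalised to 0–1, and the decision-delay range are hypothetical placeholders rather than the values used in the experiment.

```python
import random

# Channels and signals as listed in the project description.
MIMICRY_CHANNELS = {
    "behavioural": {"head_pitch", "head_yaw", "head_roll", "head_nod"},
    "facial": {"smile", "frown"},
    "emotional": {"anger", "disgust", "fear", "joy", "sadness", "surprise"},
}

# Hypothetical calibration factor: keeps mimicry noticeable but subdued.
INTENSITY_SCALE = 0.6


def mimicry_response(signal, strength, scale=INTENSITY_SCALE):
    """Map a detected user signal to a scaled mimicry response.

    `strength` is assumed to be normalised to the range 0-1 by the
    detection model. Returns None for signals that are not mimicked.
    """
    for channel, signals in MIMICRY_CHANNELS.items():
        if signal in signals:
            clamped = max(0.0, min(1.0, strength))
            return {"channel": channel, "signal": signal, "intensity": clamped * scale}
    return None


def decision_delay(rng, min_s=1.5, max_s=4.0):
    """Draw a variable pause before the stay/leave choice is shown.

    The range is a placeholder, not the timing used in the study.
    """
    return rng.uniform(min_s, max_s)


response = mimicry_response("smile", 0.8)
pause = decision_delay(random.Random(7))
```

Scaling every channel through a single factor is one simple way to tune how visible the mimicry is. Per-channel factors would be a natural extension if some signals need to be more subdued than others.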

Other impressions

A MoCap session for the development of emotional virtual humans:

Realistic versus animated news anchor:

Welcome to MAI house – Installation for Dutch Design Week 2024:

Technical Details

Tech stack:

ZBrush · Houdini · Unreal Engine (MetaHuman Creator) · Substance Painter · xNormal · Adobe Photoshop · Azure · Maya · MotionBuilder · Marvelous Designer